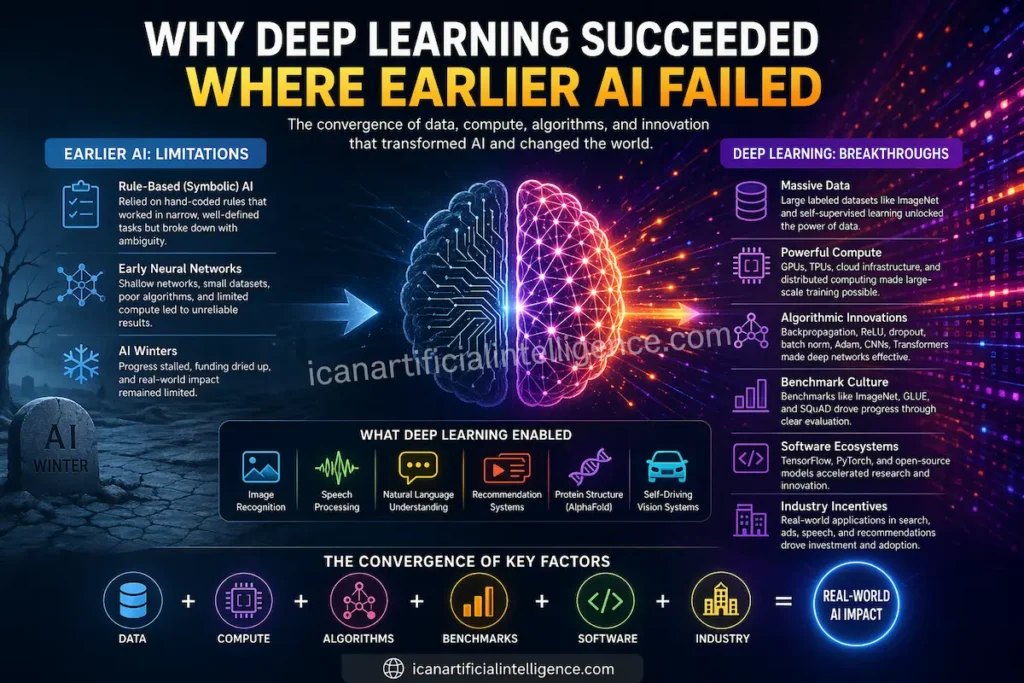

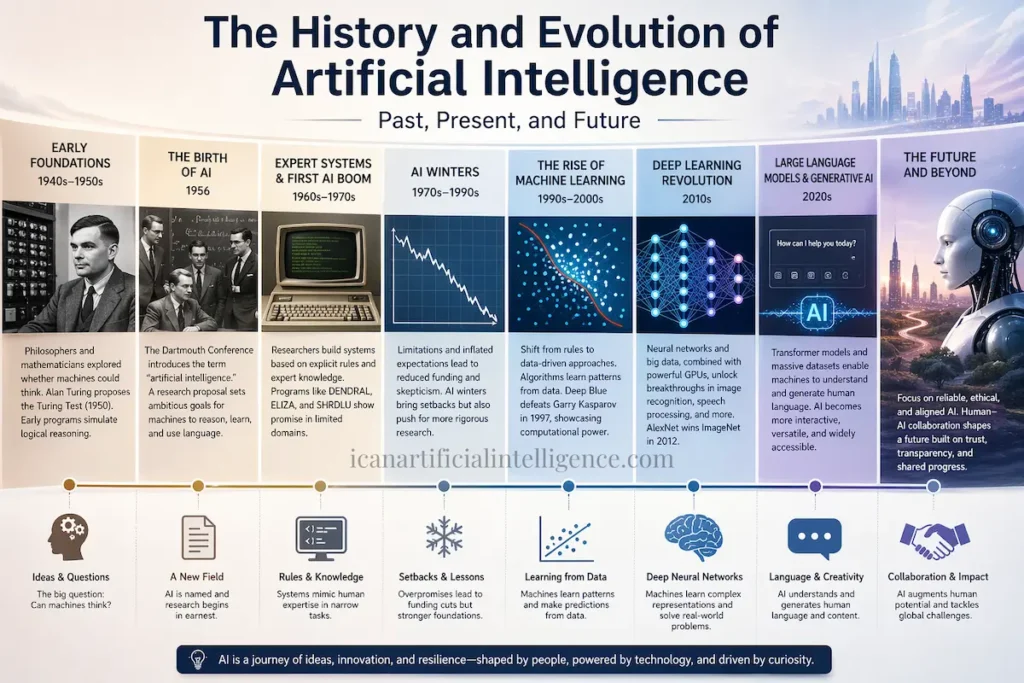

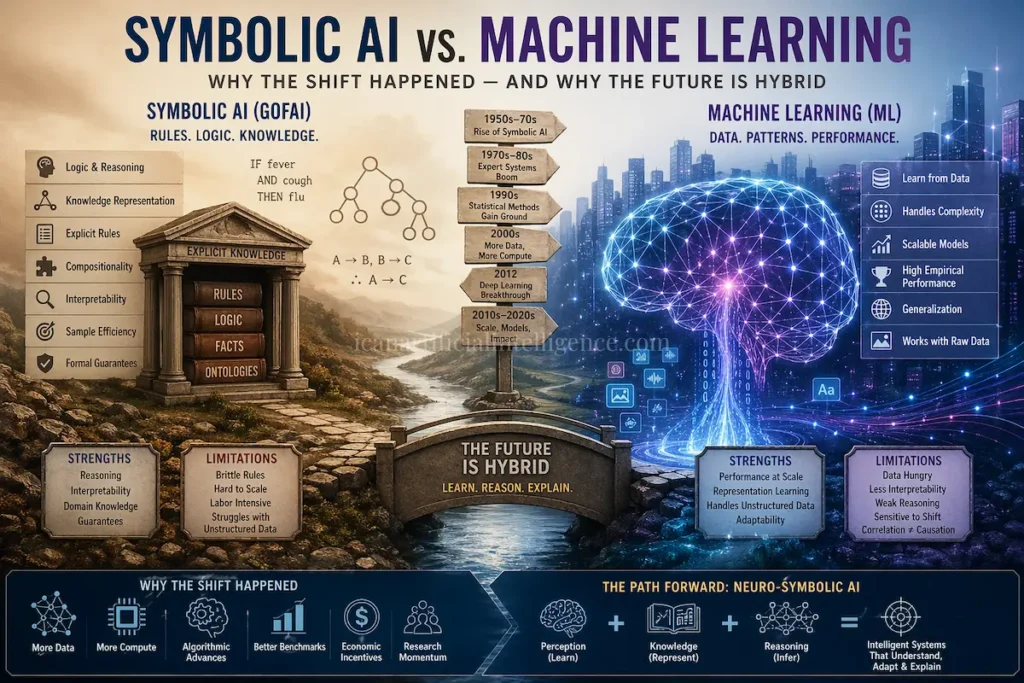

Artificial Intelligence has been around for decades, but it wasn’t always as successful as it is today. Early AI systems, including rule-based “symbolic” methods and early neural networks, often struggled to perform well in real-world situations. They worked in controlled environments but failed when faced with messy, complex data like images, audio, or informal text.

Deep learning, the approach behind most of today’s AI systems, changed that. But why did it succeed where earlier AI failed? The answer lies in a combination of advances in data, compute power, algorithms, software tooling, benchmarks, and industry support.

What is Deep Learning?

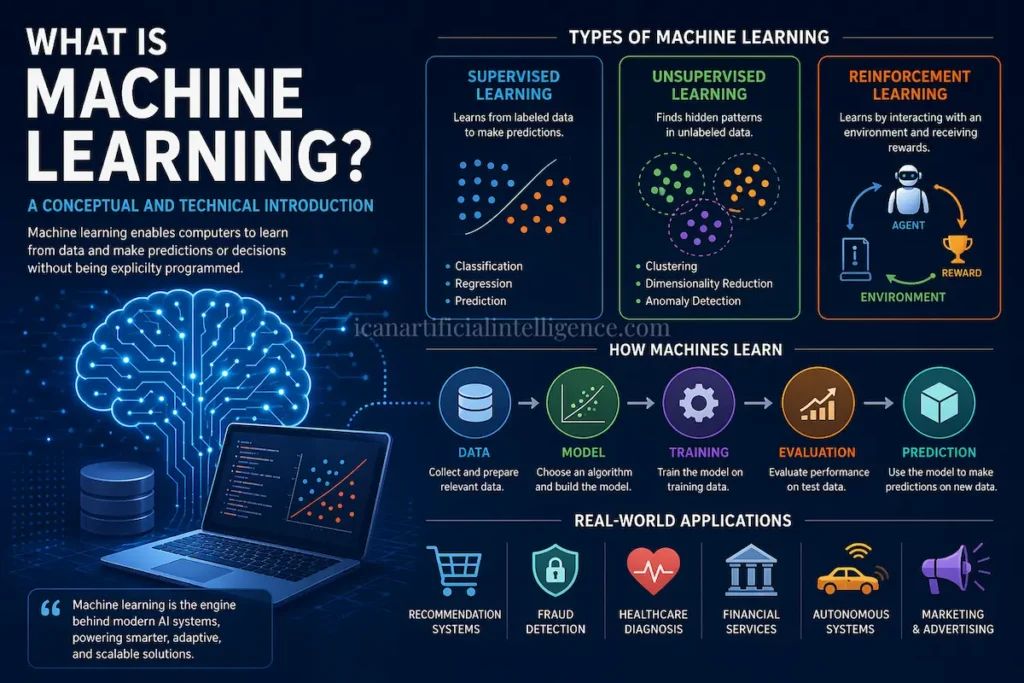

Deep learning is a branch of machine learning that uses artificial neural networks to learn patterns from data. Unlike traditional AI methods that rely on hand-coded rules, deep learning models learn their own representations from large amounts of data. These models are called “deep” because they stack many layers of neurons, and each layer builds on the output of the one before it. This stacking allows them to capture complex relationships in images, text, audio, and other types of data.

For example, a deep learning model can learn to recognize objects in pictures, understand human language, or predict the structure of proteins, simply by being trained on lots of examples.
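To make the idea of "many layers" concrete, here is a minimal sketch of a forward pass through a small stack of layers, written in plain Python. The layer sizes, the random weights, and the helper names (`dense`, `relu`, `init_layer`, `forward`) are illustrative assumptions, not part of any particular library. A real model would learn its weights from data rather than draw them at random.

```python
import random

def relu(values):
    # ReLU keeps positive values and replaces negatives with zero.
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    # One fully connected layer: each output is a weighted sum of all inputs plus a bias.
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def init_layer(n_in, n_out, rng):
    # Hypothetical random initialization, for illustration only.
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def forward(inputs, layers):
    # Pass the input through every layer in order; "depth" is the number of layers.
    activations = inputs
    for index, (weights, biases) in enumerate(layers):
        activations = dense(activations, weights, biases)
        if index < len(layers) - 1:  # no nonlinearity after the final layer
            activations = relu(activations)
    return activations

rng = random.Random(0)
layer_sizes = [4, 8, 8, 3]  # 4 inputs, two hidden layers of 8 units, 3 outputs
layers = [init_layer(n_in, n_out, rng)
          for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]
output = forward([0.5, -1.0, 2.0, 0.1], layers)
print(len(layers), "layers produce", len(output), "outputs")
```

Adding more entries to `layer_sizes` makes the network deeper without changing any other code, which is part of why the layered design scales so naturally.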

Why Earlier AI Struggled

Symbolic AI relied on hand-coded rules. These rules worked only in narrow, well-defined tasks and often broke down when faced with ambiguity.
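A toy example shows how this brittleness arises. The word lists and the function `rule_based_sentiment` below are hypothetical and not taken from any real system. They only sketch what a hand-coded rule set might look like.

```python
# Hypothetical hand-written rules: a review is positive if it contains
# more "positive" words than "negative" ones.
POSITIVE_WORDS = {"good", "great", "excellent"}
NEGATIVE_WORDS = {"bad", "terrible", "awful"}

def rule_based_sentiment(text):
    words = text.lower().split()
    score = (sum(word in POSITIVE_WORDS for word in words)
             - sum(word in NEGATIVE_WORDS for word in words))
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "unknown"

# Works on the clean case the rules were written for.
print(rule_based_sentiment("The food was great"))
# Fails on negation: the rules see "good" and ignore "not".
print(rule_based_sentiment("The food was not good"))
# Fails on informal spelling: "gr8" is not in any word list.
print(rule_based_sentiment("gr8 meal would eat again"))
```

Each failure can be patched with another rule, but every patch introduces new edge cases, which is why rule sets like this tend to stay confined to narrow tasks.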

Early neural networks, developed between the 1950s and 1980s, were shallow and limited by small datasets, immature training algorithms, and insufficient computational power. Even though backpropagation offered a way to train multi-layer networks, training was slow and unreliable in practice. These limitations contributed to periods known as “AI winters,” when progress stalled and funding dried up.
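For readers unfamiliar with backpropagation, the sketch below applies it to the smallest possible multi-layer network: one input, one hidden unit with a tanh activation, and one output. The network, the squared-error loss, and all numeric values are assumptions chosen for illustration. The code applies the chain rule backward from the loss to each weight and checks one result against a numerical estimate.

```python
import math

def forward(x, w1, w2):
    # Hidden unit with tanh activation, followed by a linear output.
    h = math.tanh(w1 * x)
    y = w2 * h
    return h, y

def loss(x, target, w1, w2):
    _, y = forward(x, w1, w2)
    return (y - target) ** 2

def backprop(x, target, w1, w2):
    # Apply the chain rule from the loss back to each weight.
    h, y = forward(x, w1, w2)
    d_y = 2.0 * (y - target)    # dLoss/dy
    d_w2 = d_y * h              # dLoss/dw2
    d_h = d_y * w2              # dLoss/dh
    d_z = d_h * (1.0 - h * h)   # tanh'(z) = 1 - tanh(z)^2
    d_w1 = d_z * x              # dLoss/dw1
    return d_w1, d_w2

def numerical_grad_w1(x, target, w1, w2, eps=1e-6):
    # Central finite difference, used only to sanity-check backprop.
    return (loss(x, target, w1 + eps, w2) - loss(x, target, w1 - eps, w2)) / (2 * eps)

x, target, w1, w2 = 0.5, 1.0, 0.8, -1.2
grad_w1, grad_w2 = backprop(x, target, w1, w2)
check_w1 = numerical_grad_w1(x, target, w1, w2)
print("backprop:", grad_w1, "numerical:", check_w1)
```

The same procedure extends to networks with millions of weights, but it needs far more arithmetic per training step, which is where the limits of early hardware became a bottleneck.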

What Made Deep Learning Different

Deep learning’s success was not due to a single breakthrough. Instead, several factors converged at roughly the same time.

Massive Data

Large labeled datasets like ImageNet gave AI models millions of examples to learn from. This allowed neural networks to discover complex patterns in data instead of relying on hand-crafted features. Later, self-supervised learning allowed models to learn from vast amounts of unlabeled data, further improving performance.
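Self-supervised learning works because the training labels come from the data itself. As a minimal sketch, the hypothetical helper `next_token_pairs` below turns raw text into (context, next word) training examples without any human labeling. Splitting on whitespace is a simplifying assumption, since real systems use more sophisticated tokenizers.

```python
def next_token_pairs(text, context_size=3):
    # Each training example asks: given these words, what word comes next?
    tokens = text.split()
    pairs = []
    for i in range(context_size, len(tokens)):
        context = tokens[i - context_size:i]
        target = tokens[i]
        pairs.append((context, target))
    return pairs

pairs = next_token_pairs("deep learning models learn patterns from data")
for context, target in pairs:
    print(context, "->", target)
```

Because every sentence yields several examples for free, the amount of usable training data is limited by how much text exists rather than by how much of it people can label.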

Powerful Compute

Training deep networks requires enormous computational power. High-performance GPUs and specialized hardware like TPUs made it possible to train large models in reasonable timeframes. Distributed computing and cloud infrastructure allowed models to scale even further.

Algorithmic Innovations

Backpropagation had been known for decades, but newer techniques such as ReLU activation functions, dropout, batch normalization, and adaptive optimizers like Adam made deep networks far more stable and efficient to train. Convolutional neural networks revolutionized computer vision, while Transformers reshaped natural language processing. These innovations collectively made deep learning practical for real-world applications.
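One way to see why the choice of activation function matters is to look at how a gradient shrinks as it passes backward through many layers. The sketch below is a deliberate simplification: it ignores the weights and multiplies only the activation derivatives, and it assumes the most favorable input for the sigmoid. The function names and the depth of 20 are illustrative assumptions.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    # The sigmoid derivative peaks at 0.25 when z = 0.
    s = sigmoid(z)
    return s * (1.0 - s)

def relu_grad(z):
    # The ReLU derivative is 1 for positive inputs and 0 otherwise.
    return 1.0 if z > 0 else 0.0

def gradient_scale(activation_grad, pre_activation, depth):
    # Multiply the activation derivative once per layer, as the chain rule does.
    scale = 1.0
    for _ in range(depth):
        scale *= activation_grad(pre_activation)
    return scale

depth = 20
sigmoid_scale = gradient_scale(sigmoid_grad, 0.0, depth)  # best case for sigmoid
relu_scale = gradient_scale(relu_grad, 1.0, depth)        # an active ReLU unit
print("sigmoid:", sigmoid_scale)
print("relu:", relu_scale)
```

Even in its best case, the sigmoid shrinks the signal by a factor of four per layer, so early layers in a deep stack receive almost no gradient. An active ReLU passes the gradient through unchanged, which is one reason it made deeper networks easier to train.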

Benchmark Culture

Community benchmarks such as ImageNet for vision, GLUE for language understanding, and SQuAD for question answering gave researchers a shared, objective way to measure progress. These benchmarks created a positive feedback loop: better results attracted more talent and funding, which fueled further breakthroughs. AlexNet’s win in the 2012 ImageNet challenge is a famous example: its clear lead over the other entries drew much of the computer vision community toward deep learning.
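Benchmarks work because everyone computes the same metric on the same data. As an illustration, here is a minimal sketch of top-k accuracy, the kind of metric reported on ImageNet, where a prediction counts as correct if the true label is among the model's k highest-scoring classes. The scores and labels below are made-up values, and `top_k_accuracy` is a hypothetical helper rather than a function from any benchmark toolkit.

```python
def top_k_accuracy(scores, labels, k):
    # scores[i][c] is the model's score for class c on example i.
    correct = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label in ranked[:k]:
            correct += 1
    return correct / len(labels)

# Made-up scores for 3 examples over 4 classes.
scores = [
    [0.1, 0.6, 0.2, 0.1],
    [0.5, 0.1, 0.3, 0.1],
    [0.3, 0.1, 0.5, 0.1],
]
labels = [1, 2, 0]

top1 = top_k_accuracy(scores, labels, k=1)
top2 = top_k_accuracy(scores, labels, k=2)
print("top-1:", top1, "top-2:", top2)
```

A single number like this makes it easy to compare very different models on a leaderboard, which is what allowed results such as AlexNet's to stand out so clearly.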

Software Ecosystems

Frameworks like TensorFlow and PyTorch, along with open-source pre-trained models, made deep learning accessible to more people. These tools allowed researchers and engineers to experiment quickly, speeding up innovation.

Industry Incentives

Companies quickly realized that better AI models could improve products in search, advertising, speech recognition, and recommendations. This created strong incentives for investment in both talent and infrastructure, which further accelerated deep learning research and applications.

What Deep Learning Enabled

Deep learning led to rapid improvements in image recognition, speech processing, natural language understanding, and recommendation systems. It has enabled groundbreaking applications like ChatGPT for conversation, AlphaFold for predicting protein structures, and self-driving car vision systems. The technology is now widely adopted in both industry and research.

Limitations of Deep Learning

Despite its success, deep learning has limitations. Most models require large datasets and substantial compute resources. They can fail when encountering situations different from their training data. Many models lack transparent reasoning or causal understanding. Interpretability and safety remain challenges, and large-scale training raises ethical, privacy, and environmental concerns.

Many researchers argue that scaling alone is unlikely to produce human-like reasoning or common sense. To address these limitations, they are exploring ways to combine deep learning with symbolic reasoning, causal modeling, and structured prior knowledge.

Conclusion

Deep learning succeeded where earlier AI approaches failed because multiple factors converged at roughly the same time: abundant data, powerful compute, better training algorithms, benchmark-driven evaluation, accessible software, and strong industrial incentives. This convergence allowed AI to generalize beyond narrow tasks and unlock practical applications across industries.

However, deep learning is not the end of AI. Limitations in reasoning, interpretability, and safety show that complementary approaches, including symbolic and causal methods, will likely play an essential role in the future. For anyone interested in AI, understanding these successes and limitations is key to navigating the rapidly evolving landscape of artificial intelligence.

References

Vaswani, A., et al. (2017). Attention Is All You Need.

Kaplan, J., et al. (2020). Scaling Laws for Neural Language Models.