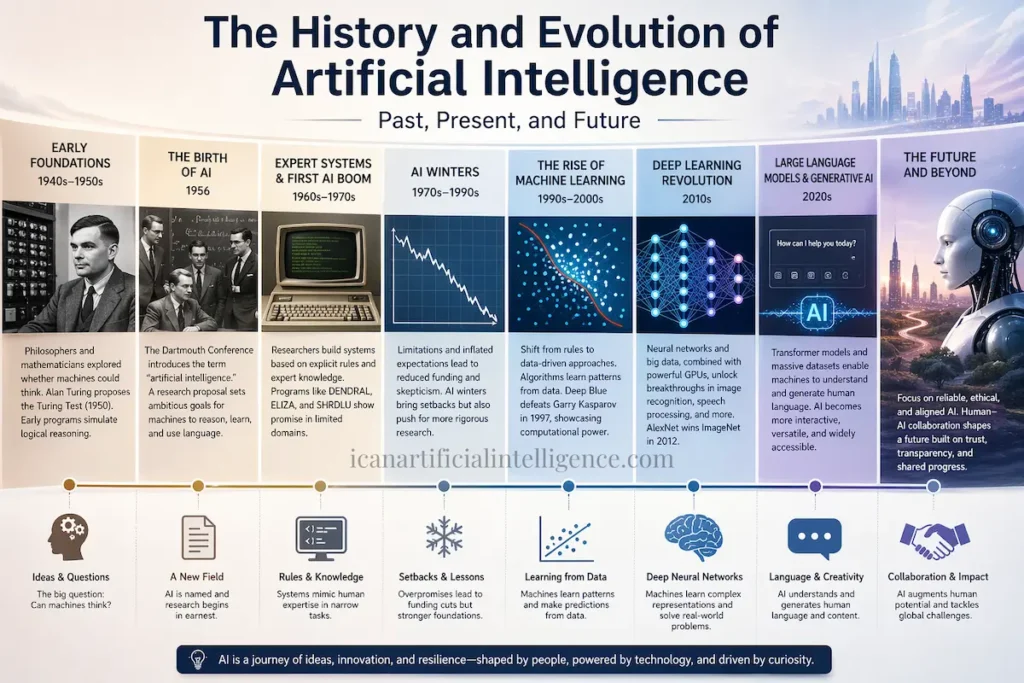

Artificial intelligence did not appear overnight. Long before modern computers, philosophers and mathematicians were already asking whether machines could think. What began as abstract logic and symbolic reasoning has evolved into systems capable of understanding language, recognizing images, and generating content. This evolution reflects a blend of philosophical inquiry, mathematical theory, technological innovation, and relentless curiosity.

From a practical perspective, AI’s progress has never been linear. Periods of optimism have often been followed by skepticism and retrenchment. Understanding this historical rhythm is essential today, when AI systems appear increasingly capable and influential.

Today’s AI technologies build on decades of research, representing both incremental progress and occasional revolutionary shifts. From early conceptual foundations to modern language models, the history of artificial intelligence is rich with milestones that transformed what machines can do. Understanding this history not only clarifies how current systems function but also helps us evaluate future developments with a grounded perspective.

What Is Artificial Intelligence?

At its most basic, artificial intelligence (AI) refers to the science and engineering of creating machines or computer programs capable of performing tasks that normally require human intelligence. This includes problem‑solving, pattern recognition, language comprehension, and decision‑making. AI is not magic—it relies on algorithms, data, and computational processes that follow well‑defined rules or learned patterns.

There are two broad conceptual types of AI:

1. Narrow (or Weak) AI

Narrow AI refers to systems designed for specific tasks, such as speech recognition, image classification, web search, or content recommendation. Nearly all AI systems in practical use today fall into this category. They operate within defined boundaries and do not possess general understanding or consciousness.

2. Artificial General Intelligence (AGI)

AGI refers to hypothetical systems with broad cognitive abilities comparable to human intelligence—capable of learning, reasoning, and adapting across domains. As of today, AGI remains a theoretical concept and has not been achieved.

This distinction matters because even advanced systems such as large language models are examples of narrow AI: powerful, flexible, and useful, but fundamentally limited to pattern recognition and probabilistic reasoning.

Early Foundations — Logic, Philosophy, and Computation (1940s–1950s)

The intellectual roots of AI lie in philosophy and mathematical logic. In 1950, British mathematician Alan Turing published his landmark paper Computing Machinery and Intelligence, introducing what later became known as the Turing Test. Rather than asking whether machines could think in an abstract sense, Turing proposed an operational criterion: if a machine could convincingly imitate human conversation, it could be considered intelligent.

Around the same period, early computer scientists such as Allen Newell, Herbert A. Simon, and Cliff Shaw developed programs like the Logic Theorist and General Problem Solver. These systems attempted to model human reasoning using symbolic logic, marking the beginning of what later came to be known as symbolic AI.

Arthur Samuel’s work on a self‑learning checkers program further demonstrated that machines could improve performance through experience, introducing early ideas that would later evolve into machine learning.

The Birth of AI as a Field (1956 Dartmouth Conference)

Artificial intelligence formally emerged as an academic field in 1956 at the Dartmouth Summer Research Project on Artificial Intelligence. The workshop was organized by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, whose 1955 proposal for the event introduced the term artificial intelligence.

Importantly, there was no single “Dartmouth paper” presenting results. What exists is a research proposal, which outlined ambitious goals such as enabling machines to reason, learn, and use language. That proposal became influential not because of empirical findings, but because it framed AI as a legitimate scientific discipline worthy of sustained research.

The optimism of this era shaped early funding and expectations, many of which later proved overly ambitious.

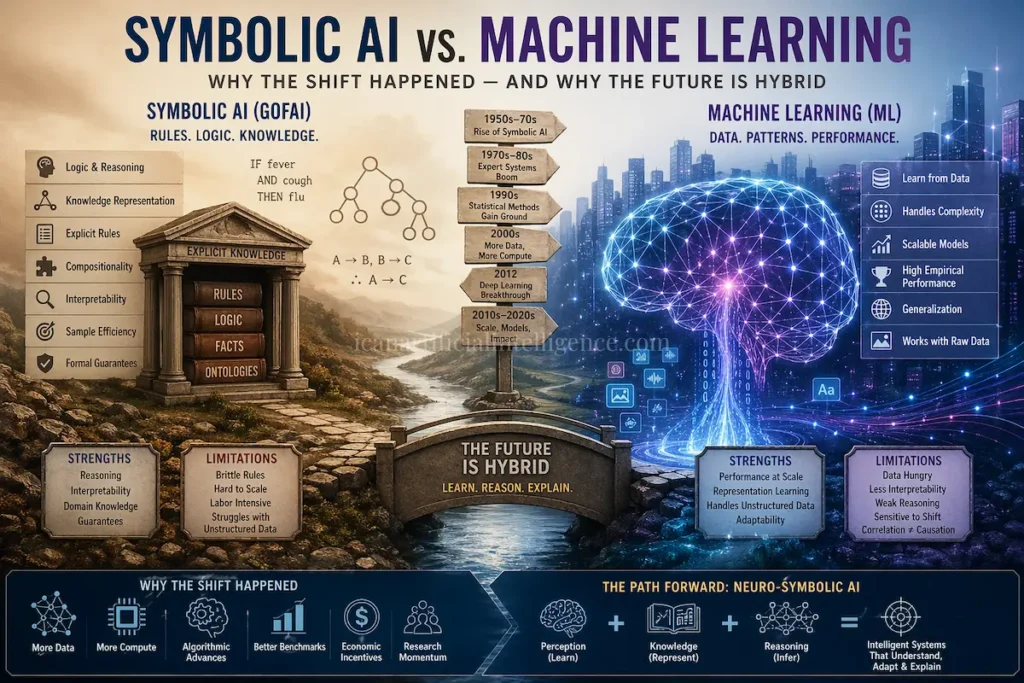

Expert Systems and the First AI Boom (1960s–1970s)

Following the Dartmouth conference, AI research focused heavily on symbolic systems built around explicit rules and expert knowledge. One of the most influential projects was DENDRAL, developed to assist chemists in identifying molecular structures.

Other notable systems included ELIZA, an early natural language processing program, and SHRDLU, which demonstrated limited language understanding in constrained environments. These systems showed promise but depended heavily on manually crafted rules and simplified domains.

Expert systems performed well within narrow contexts but struggled with ambiguity, scale, and real‑world complexity.
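To make the reliance on manually crafted rules concrete, here is a minimal sketch of an ELIZA-style responder in Python. The patterns, response templates, and the `respond` function are hypothetical illustrations and are not taken from the original ELIZA script; the point is only that every behavior has to be written by hand.

```python
import re

# Hypothetical hand-written rules: (pattern, response template).
# Illustrative only; these are not the rules used by the historical ELIZA.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bmy (\w+)", re.IGNORECASE), "Tell me more about your {0}."),
]

FALLBACK = "Please go on."


def respond(utterance: str) -> str:
    """Return the response of the first matching rule, or a generic fallback."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(match.group(1))
    return FALLBACK


if __name__ == "__main__":
    print(respond("I am tired of debugging."))
    print(respond("The weather changed quickly."))
```

The second input matches none of the rules, so the program falls back to a generic reply. Any sentence outside the anticipated patterns gets the same treatment, which is the brittleness described above.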

AI Winters — When Expectations Collapsed (1970s–1990s)

As limitations became apparent, funding agencies and governments grew skeptical. The result was a series of downturns known as AI winters, during which research funding and public enthusiasm declined sharply.

The first major AI winter occurred in the 1970s, followed by another in the late 1980s and early 1990s. These periods revealed a recurring pattern: ambitious claims outpaced technical feasibility.

From a long‑term perspective, AI winters were not failures but necessary corrections. They forced researchers to adopt more rigorous methods and realistic goals.

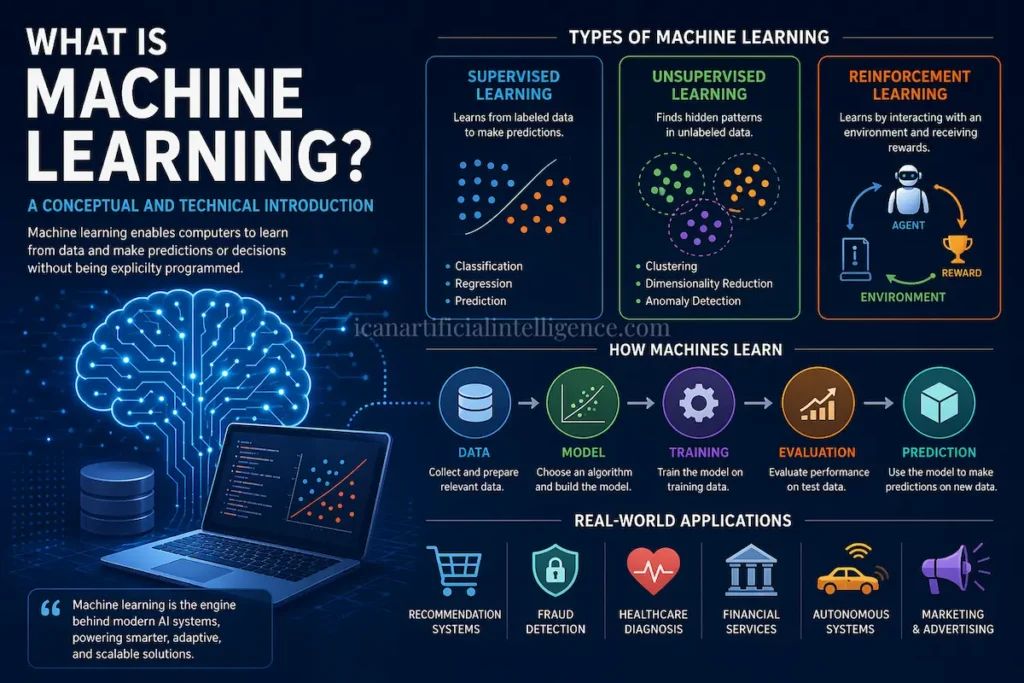

The Rise of Machine Learning (1990s–2000s)

By the 1990s, AI research shifted away from handcrafted rules toward statistical, data‑driven approaches. This transition marked the rise of modern machine learning.

Algorithms such as decision trees, support vector machines, and probabilistic models enabled systems to learn patterns from data rather than relying on explicit instructions. The emphasis moved toward generalization—the ability to perform well on unseen data.
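The idea of learning a rule from data and then checking it on unseen examples can be sketched in a few lines of Python. The example below fits a decision stump (a decision tree with a single split) to a small made-up dataset and measures accuracy on held-out points. The data, the function names `fit_stump` and `accuracy`, and the feature itself are assumptions chosen for illustration.

```python
# Hypothetical toy data: (hours_of_daylight, label), where label 1 means "summer".
TRAIN = [(8.0, 0), (9.0, 0), (10.0, 0), (14.0, 1), (15.0, 1), (16.0, 1)]
TEST = [(8.5, 0), (11.0, 0), (13.5, 1), (15.5, 1)]  # never seen during fitting


def fit_stump(samples):
    """Choose the threshold that makes the fewest errors on the training samples."""
    values = sorted(x for x, _ in samples)
    # Candidate thresholds are midpoints between neighboring training values.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(threshold):
        return sum((x > threshold) != bool(y) for x, y in samples)

    return min(candidates, key=errors)


def accuracy(threshold, samples):
    """Fraction of samples for which 'x > threshold' agrees with the label."""
    correct = sum((x > threshold) == bool(y) for x, y in samples)
    return correct / len(samples)


threshold = fit_stump(TRAIN)
print("learned threshold:", threshold)
print("held-out accuracy:", accuracy(threshold, TEST))
```

Nobody writes the threshold by hand; it is derived from the training examples, and the held-out accuracy is the measure of whether the learned rule generalizes.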

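IBM’s Deep Blue defeated world chess champion Garry Kasparov in 1997. Deep Blue relied mainly on large-scale search and hand-tuned evaluation rather than on learning from data, but the match became a public symbol of what computational approaches could achieve in complex problem spaces.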

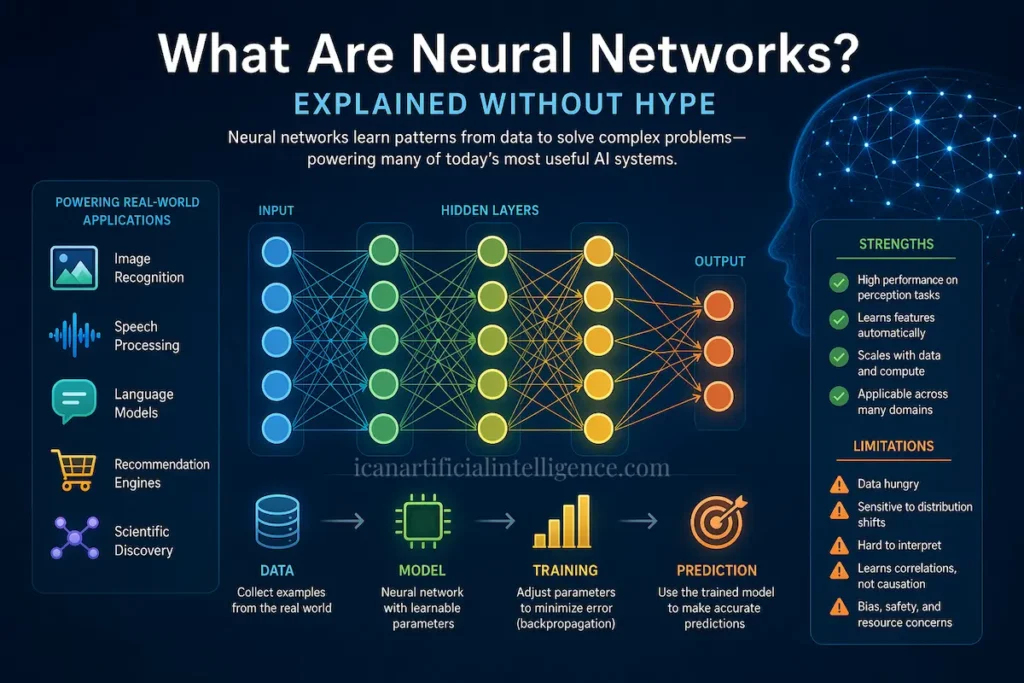

Deep Learning and Neural Networks (2010s)

The modern resurgence of AI is closely tied to deep learning. Advances in neural network architectures, combined with large datasets and powerful GPUs, made it possible to train models with many layers and parameters.

A key milestone occurred in 2012 when AlexNet dramatically outperformed competing models in the ImageNet image recognition challenge. This success demonstrated that deep neural networks could automatically learn useful feature representations at scale.

Deep learning soon transformed fields such as speech recognition, computer vision, medical imaging, and autonomous systems.

Large Language Models and Generative AI (2020s)

In the 2020s, large language models (LLMs) became one of the most visible outcomes of deep learning research. These models rely on the transformer architecture, introduced in the 2017 paper Attention Is All You Need, which replaces recurrence with attention so that all positions in a sequence can be processed in parallel during training.
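The attention operation at the core of the transformer can be sketched in plain Python. The version below is a simplified, single-head scaled dot-product attention with no learned projections, masking, or batching. The function names and the list-of-lists representation are assumptions made for readability, not how production libraries implement it.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


if __name__ == "__main__":
    q = [[1.0, 0.0]]
    k = [[1.0, 1.0], [1.0, 1.0]]
    v = [[0.0, 2.0], [4.0, 6.0]]
    print(attention(q, k, v))
```

Each query is handled independently of the others, which is why the computation parallelizes well across sequence positions. In the usage example both keys are identical, so the query attends to them equally and the output is the average of the two value vectors.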

LLMs are trained on massive text corpora and can generate coherent, contextually relevant language. While they remain narrow AI systems, they represent a qualitative shift in how humans interact with machines—moving from command‑based interfaces to conversational collaboration.

Why Understanding AI History Matters Today

Historical perspective helps temper unrealistic expectations and exaggerated fears. Many contemporary debates—about automation, job displacement, or machine autonomy—echo earlier discussions from previous AI cycles.

Understanding the technical and conceptual limits of past systems makes it easier to evaluate modern claims critically, rather than accepting them at face value.

Where Artificial Intelligence Is Headed

Current research focuses less on speculative intelligence and more on reliability, alignment, transparency, and human–AI collaboration. Ethical considerations such as bias, accountability, and governance are now central to AI development.

Rather than sudden breakthroughs, progress increasingly appears incremental, interdisciplinary, and constrained by social as well as technical factors.

Conclusion

The development of artificial intelligence has been evolutionary rather than sudden. From early philosophical questions to symbolic reasoning systems, from statistical learning to deep neural networks, AI has advanced through cycles of ambition, limitation, and reinvention.

Understanding this history provides a grounded framework for assessing present‑day technologies and future possibilities—encouraging cautious optimism informed by technical reality rather than hype.

References & Further Reading

- Alan Turing, Computing Machinery and Intelligence (1950)

- John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence (1955)

- Stanford Encyclopedia of Philosophy – Artificial Intelligence

- Encyclopedia Britannica – Artificial Intelligence

- IBM Research – History of AI

- Ashish Vaswani et al., Attention Is All You Need (2017)

This article presents a historical and conceptual overview based on widely accepted academic and industry sources.