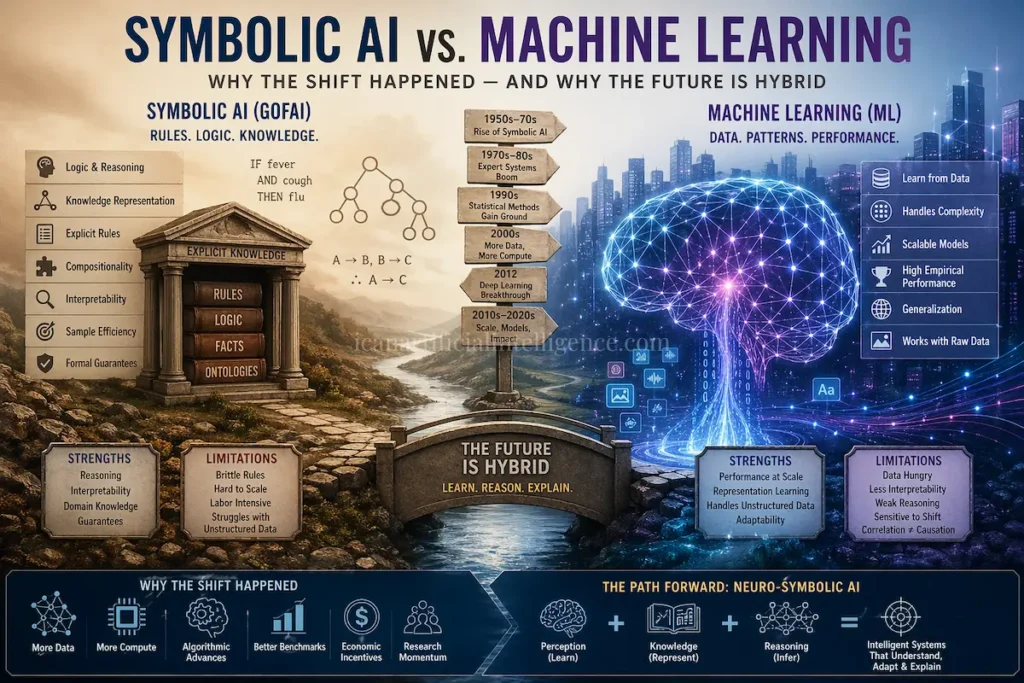

This article explains the historical, technical, and economic reasons the field of artificial intelligence (AI) shifted from a dominance of symbolic, rule-based methods often called Good Old-Fashioned AI (GOFAI) toward statistical and machine-learning approaches, particularly neural networks and deep learning. It reviews key milestones, strengths and limitations of each paradigm, the social and economic forces that drove the transition, and the current resurgence of hybrid (neuro-symbolic) approaches.

1. What We Mean by the Two Paradigms

Symbolic AI (GOFAI)

Approaches that represent knowledge explicitly using symbols, logic, and rules. Core techniques include logic programming (e.g., Prolog), production-rule systems and expert systems, semantic networks, frames, and symbolic planning systems.

Emphasis: explicit knowledge representation, logical inference, and hand-crafted rules.
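To make "hand-crafted rules" and "logical inference" concrete, here is a minimal sketch of forward chaining, the inference loop behind many production-rule systems. A rule fires whenever all of its premises are known, and the loop repeats until nothing new can be derived. The rule base, fact names, and the `forward_chain` function are hypothetical illustrations and are not taken from any real expert system. The rules are deliberately simplistic.

```python
# Minimal forward-chaining production-rule engine (illustrative sketch).
from typing import FrozenSet, List, Set, Tuple

Rule = Tuple[FrozenSet[str], str]  # (premises, conclusion): IF all premises THEN conclusion

# Hypothetical rule base in the style of classic "identify the animal" demos.
RULES: List[Rule] = [
    (frozenset({"has_feathers"}), "is_bird"),
    (frozenset({"is_bird", "cannot_fly", "swims"}), "is_penguin"),
    (frozenset({"is_penguin"}), "is_seabird"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Tuple[Set[str], List[str]]:
    """Fire every rule whose premises are all known until no new fact appears."""
    known = set(facts)
    trace: List[str] = []  # human-readable record of which rule produced which fact
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                trace.append(" & ".join(sorted(premises)) + " -> " + conclusion)
                changed = True
    return known, trace


derived, trace = forward_chain({"has_feathers", "cannot_fly", "swims"}, RULES)
for line in trace:
    print(line)
```

The returned trace is an explicit, inspectable decision record. You can trace every derived fact back to the rule and premises that produced it.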

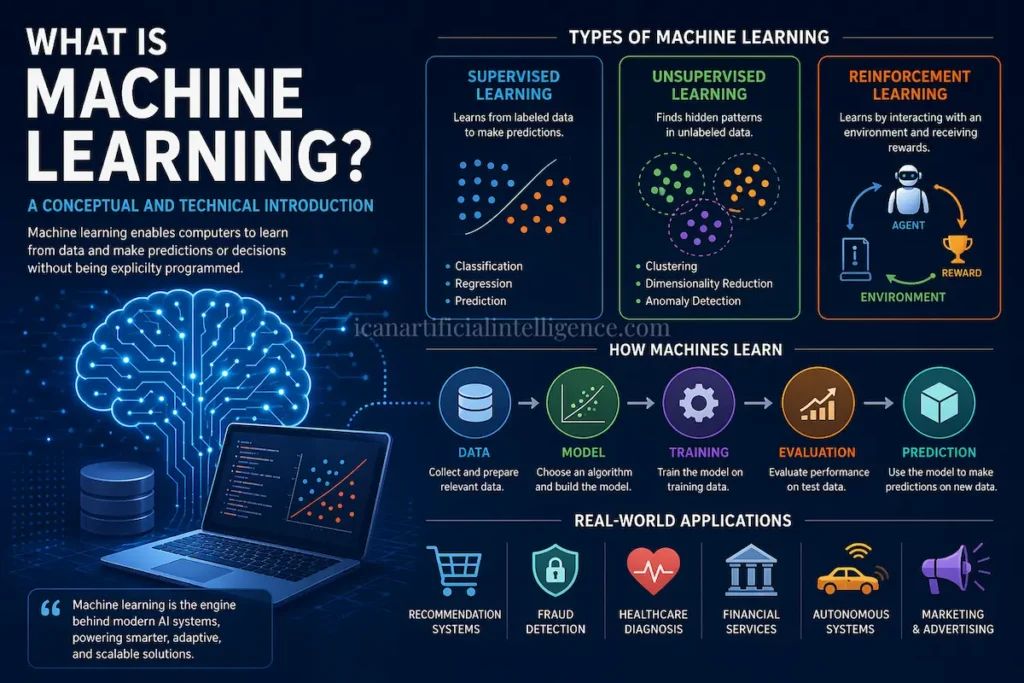

Machine Learning (ML) / Statistical AI

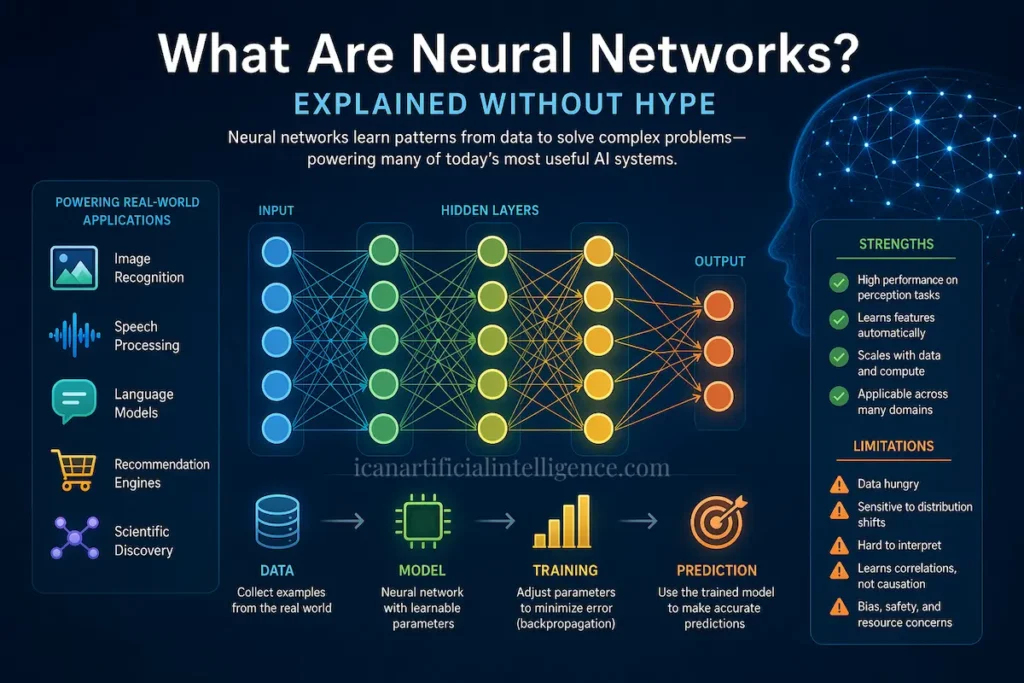

Approaches that learn patterns and functions from data using statistical and optimization methods. This category includes classical techniques (decision trees, support vector machines), probabilistic graphical models (e.g., Bayesian networks, HMMs), and neural networks, especially modern deep learning systems trained on large datasets.

Emphasis: data-driven learning, generalization, and empirical performance.
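By contrast, a learned rule is fitted to labeled examples instead of being written by hand. The sketch below fits a depth-one decision tree (a "decision stump"). It tries each candidate threshold and keeps the one with the fewest training errors. The data and the `fit_stump` helper are made up for illustration. Real decision-tree learners typically optimize impurity measures such as Gini or entropy rather than raw error count.

```python
# Learn a one-feature threshold rule (a decision stump) from labeled examples.
from typing import List, Tuple


def fit_stump(xs: List[float], ys: List[int]) -> Tuple[float, int]:
    """Return (threshold, training_errors) for the rule: predict 1 if x > threshold."""
    points = sorted(set(xs))
    # Candidate thresholds: one below the minimum, plus midpoints between adjacent values.
    candidates = [points[0] - 1.0] + [(a + b) / 2 for a, b in zip(points, points[1:])]
    best_t, best_err = candidates[0], len(xs) + 1
    for t in candidates:
        err = sum(int((x > t) != bool(y)) for x, y in zip(xs, ys))
        if err < best_err:
            best_t, best_err = t, err
    return best_t, best_err


# Hypothetical toy data: one numeric feature and a binary label per example.
xs = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
ys = [0, 0, 0, 1, 1, 1]
threshold, errors = fit_stump(xs, ys)
print(threshold, errors)
```

The threshold is derived from the data. Given different examples, the same code yields a different rule, with no one editing it by hand.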

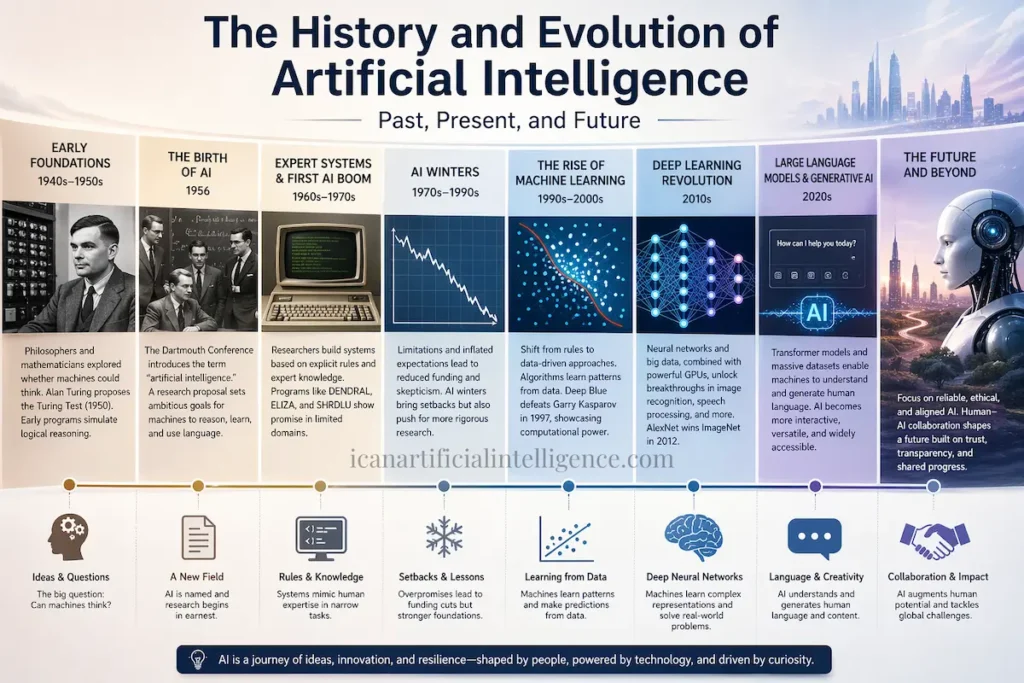

2. Short Historical Timeline (High Level)

- 1950s–1960s: Foundational symbolic work. Early programs such as the Logic Theorist and General Problem Solver, alongside the development of LISP and early work on knowledge representation, establish symbolic AI as the dominant paradigm.

- 1960s–1970s: Rapid growth of symbolic reasoning, logic, and planning. Early neural network research (the perceptron) also appears. Minsky & Papert's (1969) analysis of the limitations of single-layer perceptrons then contributes to a slowdown in neural network research.

- 1970s–1980s: Expert systems (e.g., DENDRAL, MYCIN, later XCON) demonstrate real industrial value. Rule-based symbolic systems dominate many applied AI settings.

- 1986 onward: The rediscovery and popularization of backpropagation (Rumelhart, Hinton & Williams, 1986) revitalize neural network research. Progress is initially slow due to limited data and compute.

- Late 1980s–1990s: Statistical methods and probabilistic models (hidden Markov models, Bayesian networks) gain prominence. The collapse of the commercial expert-system market brings a second “AI winter.” This repeats the pattern of the mid-1970s, when overpromises, unmet expectations, reports such as Lighthill (1973), and shifting government priorities had already led to funding contractions.

- 2012: A deep convolutional neural network (AlexNet) wins the ImageNet Large Scale Visual Recognition Challenge by a wide margin, catalyzing large-scale industry investment in deep learning.

- 2010s–2020s: Rapid advances driven by scale (data and compute), improved architectures (CNNs, Transformers), and optimization techniques. Growing interest emerges in combining symbolic reasoning with learning in neuro-symbolic systems.
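A few lines of code can reproduce the Minsky & Papert limitation. A single-layer perceptron trained with the classic error-correction rule learns AND, which is linearly separable. It can never classify all four XOR cases correctly, because no single line separates them. Adding a hidden layer removes that limitation. In this sketch the two-layer network's weights are set by hand. Backpropagation is what later made it practical to learn such hidden-layer weights from data, and this sketch does not implement it. The datasets, the learning rate of 1, and the function names are illustrative choices.

```python
# Perceptron learning rule on AND vs. XOR, plus a hand-wired two-layer network for XOR.
from typing import Callable, Dict, List, Tuple

Point = Tuple[int, int]
Dataset = List[Tuple[Point, int]]

AND: Dataset = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR: Dataset = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def step(z: float) -> int:
    """Threshold activation: fire (1) only if the weighted sum is positive."""
    return 1 if z > 0 else 0


def train_perceptron(data: Dataset, epochs: int = 100) -> Tuple[List[float], float]:
    """Perceptron error-correction updates with learning rate 1."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for (x1, x2), y in data:
            err = y - step(w[0] * x1 + w[1] * x2 + b)
            if err != 0:
                w[0] += err * x1
                w[1] += err * x2
                b += err
                mistakes += 1
        if mistakes == 0:  # every example classified correctly: stop early
            break
    return w, b


def accuracy(predict: Callable[[Point], int], data: Dataset) -> float:
    return sum(predict(x) == y for x, y in data) / len(data)


results: Dict[str, float] = {}
for name, data in [("AND", AND), ("XOR", XOR)]:
    w, b = train_perceptron(data)
    results[name] = accuracy(lambda p: step(w[0] * p[0] + w[1] * p[1] + b), data)


def xor_two_layer(p: Point) -> int:
    h_or = step(p[0] + p[1] - 0.5)     # hidden unit 1 computes OR
    h_nand = step(-p[0] - p[1] + 1.5)  # hidden unit 2 computes NAND
    return step(h_or + h_nand - 1.5)   # output unit computes AND of the two


results["XOR (two-layer, hand-set)"] = accuracy(xor_two_layer, XOR)
print(results)
```

The perceptron reaches full accuracy on AND and stays below it on XOR however many epochs it runs. The hand-wired two-layer network gets all four XOR cases right.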

3. Why the Shift Happened — Core Reasons

1. Data Availability and Scale

- Symbolic systems rely on explicitly encoded rules and ontologies, typically authored by domain experts. This process is expensive and scales poorly.

- The rise of the web, digital sensors, and large-scale logging produced massive datasets. Supervised, self-supervised, and unsupervised learning methods thrive when data is abundant.

2. Compute and Hardware Advances

- GPUs, TPUs, and distributed systems made training large neural models feasible. As hardware improved, model capacity and achievable accuracy increased dramatically.

3. Algorithmic Progress

- Advances in architectures (CNNs, LSTMs, Transformers), optimization (Adam, better initialization, batch normalization), and regularization (dropout, data augmentation) significantly improved stability and generalization.

- Deep neural networks proved capable of learning hierarchical representations directly from raw data.

4. Empirical Metrics and Benchmarks

- ML systems set new states of the art on widely accepted benchmarks (e.g., ImageNet, GLUE, SQuAD), often by wide margins over earlier systems built on hand-engineered features or rules. Quantitative gains strongly influenced industry adoption and research funding.

5. Brittleness and Cost of Hand-Coded Rules

- Symbolic systems often fail when encountering unanticipated real-world variability.

- Covering the “long tail” of edge cases requires extensive manual effort.

- Rule-based systems struggle with noisy, ambiguous, or high-dimensional sensory inputs such as images, audio, and natural language.

6. Economic Incentives and Product Fit

- Commercial applications such as search ranking, recommender systems, ad targeting, speech recognition, and, later, large language models benefited directly from statistical learning on large datasets.

- Investment followed technologies that delivered measurable business value.

7. Research and Community Momentum

- Successful ML results attracted talent, funding, and infrastructure, reinforcing a positive feedback loop that accelerated progress in data-driven approaches.
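The brittleness described in item 5 is easy to reproduce. The sketch below is a hypothetical keyword-counting sentiment rule. It handles the sentences its author anticipated but fails on negation and sarcasm. Fixing each failure would need yet another hand-written special case, which is the long-tail cost. The word lists and sentences are invented for illustration.

```python
# Hypothetical hand-written sentiment rule: count positive and negative keywords.
from typing import List, Tuple

POSITIVE = {"good", "great", "excellent"}
NEGATIVE = {"bad", "terrible", "awful"}


def rule_sentiment(text: str) -> str:
    words = text.lower().replace(",", " ").replace(".", " ").split()
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# (sentence, what a human reader would say)
CASES: List[Tuple[str, str]] = [
    ("The food was great.", "positive"),
    ("The service was terrible.", "negative"),
    ("The food was not good.", "negative"),         # negation the rule never anticipated
    ("Not bad at all, honestly.", "positive"),      # negated negative
    ("Great, another two-hour wait.", "negative"),  # sarcasm
]

failures = [(s, want, rule_sentiment(s)) for s, want in CASES if rule_sentiment(s) != want]
for sentence, want, got in failures:
    print(f"expected {want}, rule said {got}: {sentence}")
```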

4. What Symbolic AI Was (and Still Is) Good At

- Explicit reasoning, compositionality, and interpretable decision traces.

- Encoding background knowledge, constraints, ontologies, and causal rules.

- Sample-efficient reasoning when domain structure is known and data is scarce.

- Providing formal guarantees in planning, verification, and safety-critical systems.
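The sketch below gives a small example of symbolic planning with a guarantee. It runs breadth-first search over STRIPS-style actions, each with preconditions, add effects, and delete effects. Breadth-first search explores states in order of plan length, so the first plan it returns uses the fewest actions. It returns `None` only after exhausting every reachable state. The robot-and-key domain, the `Action` type, and the `plan` and `facts` helpers are hypothetical.

```python
# Breadth-first forward search over STRIPS-style actions (illustrative sketch).
from collections import deque
from typing import Dict, FrozenSet, List, NamedTuple, Optional, Tuple

State = FrozenSet[str]


class Action(NamedTuple):
    name: str
    pre: State     # facts that must hold before the action applies
    add: State     # facts the action makes true
    delete: State  # facts the action makes false


def plan(start: State, goal: State, actions: List[Action]) -> Optional[List[str]]:
    """Return a shortest sequence of action names reaching a state that contains `goal`."""
    frontier = deque([start])
    parent: Dict[State, Optional[Tuple[State, str]]] = {start: None}  # doubles as visited set
    while frontier:
        state = frontier.popleft()
        if goal <= state:
            steps: List[str] = []
            link = parent[state]
            while link is not None:  # walk back to the start state
                prev, name = link
                steps.append(name)
                link = parent[prev]
            return steps[::-1]
        for act in actions:
            if act.pre <= state:
                nxt = (state - act.delete) | act.add
                if nxt not in parent:
                    parent[nxt] = (state, act.name)
                    frontier.append(nxt)
    return None  # every reachable state explored: goal is unreachable


def facts(*names: str) -> State:
    return frozenset(names)


# Hypothetical domain: a robot must fetch a key before it can open a door.
ACTIONS = [
    Action("go_to_key", pre=facts("at_door"), add=facts("at_key"), delete=facts("at_door")),
    Action("go_to_door", pre=facts("at_key"), add=facts("at_door"), delete=facts("at_key")),
    Action("pick_up_key", pre=facts("at_key"), add=facts("has_key"), delete=facts()),
    Action("open_door", pre=facts("at_door", "has_key"), add=facts("door_open"), delete=facts()),
]

result = plan(facts("at_door"), facts("door_open"), ACTIONS)
print(result)  # -> ['go_to_key', 'pick_up_key', 'go_to_door', 'open_door']
```

The output is itself an interpretable trace. Each step names an action whose preconditions you can check against the domain description.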

5. What ML / Deep Learning Excels At — and Where It Struggles

Strengths

- Processing high-dimensional sensory data (vision, speech, raw text).

- Learning representations with minimal manual feature engineering.

- Strong empirical performance on many benchmarks and real-world products.

Limitations

- Data hunger: large datasets or extensive pretraining are often required.

- Limited interpretability and lack of explicit reasoning traces.

- Weak systematic generalization and compositional reasoning in many settings.

- Sensitivity to distribution shifts and adversarial perturbations.

- Limited explicit causal understanding (correlation does not imply causation).
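Linear models offer one intuition for adversarial sensitivity; the sketch uses one as a simplified stand-in for a deep network. Take a score w·x + b and nudge every feature by at most ε in the direction of -sign(w_i), which is the fast-gradient-sign idea. This lowers the score by ε·Σ|w_i|, which grows with the number of features even when each nudge is tiny. The weights, input, ε, and helper names below are hypothetical.

```python
# Fast-gradient-sign-style perturbation of a linear classifier (illustrative sketch).
from typing import List


def score(w: List[float], b: float, x: List[float]) -> float:
    """Linear decision score; predict the positive class when it is > 0."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def sign(v: float) -> int:
    return (v > 0) - (v < 0)


def fgsm_linear(w: List[float], x: List[float], eps: float, label: int) -> List[float]:
    """Shift each feature by eps against the true class; for a linear score, d(score)/dx = w."""
    direction = -1.0 if label == 1 else 1.0
    return [xi + direction * eps * sign(wi) for xi, wi in zip(x, w)]


n = 100
w = [0.1] * n   # many small weights, as in a high-dimensional input such as an image
b = 0.0
x = [0.05] * n  # clean input: score is about 100 * 0.1 * 0.05 = 0.5, so class 1
x_adv = fgsm_linear(w, x, eps=0.1, label=1)

print("clean score:", round(score(w, b, x), 3))
print("perturbed score:", round(score(w, b, x_adv), 3))
print("largest per-feature change:", max(abs(a - c) for a, c in zip(x, x_adv)))
```

No feature moves by more than ε = 0.1, yet the score flips sign. With 1,000 features and the same weights, the same ε would shift the score by 10.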

6. AI Winters and Pendulum Swings

- Overpromises followed by underdelivery led to funding cuts known as AI winters.

- Reports such as the Lighthill Report (1973) and changing DARPA priorities played significant roles.

- Each swing favored whichever paradigm demonstrated the most immediate practical return at the time.

7. The Rise of Hybrid and Neuro-Symbolic Approaches

- The shortcomings of purely symbolic and purely statistical systems motivated hybrid approaches.

- Neuro-symbolic AI aims to combine learning-based perception with symbolic reasoning, logic, and constraints.

- Examples include neural models augmented with symbolic planners, differentiable reasoning modules, knowledge graphs, and causal structures.

- This direction reflects a growing consensus that no single paradigm is sufficient for general intelligence or many safety-critical applications.
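The sketch below is a toy version of the perception-plus-constraints pattern. Two hypothetical digit classifiers each output a probability distribution, and a symbolic fact states that the two digits sum to 8. Taking each classifier's top guess independently violates that fact. Searching only over digit pairs that satisfy the constraint yields the most probable consistent reading. The probabilities, the independence assumption, and the function name are invented for illustration. Research systems often go further and make this combination differentiable so the constraint can guide training; this inference-only sketch does not.

```python
# Neuro-symbolic sketch: hypothetical neural outputs combined with a symbolic constraint.
from itertools import product
from typing import Dict, Optional, Tuple

# Hypothetical softmax outputs for two digit images (digits with zero probability omitted).
p_first: Dict[int, float] = {3: 0.6, 5: 0.3, 8: 0.1}
p_second: Dict[int, float] = {3: 0.5, 5: 0.4, 0: 0.1}
TARGET_SUM = 8  # symbolic knowledge: the two digits must add up to 8


def most_probable_consistent(
    p_a: Dict[int, float], p_b: Dict[int, float], target_sum: int
) -> Tuple[Optional[Tuple[int, int]], float]:
    """Return the highest-probability digit pair that satisfies the sum constraint."""
    best: Optional[Tuple[int, int]] = None
    best_p = 0.0
    for (a, pa), (b, pb) in product(p_a.items(), p_b.items()):
        joint = pa * pb  # assumes the two classifiers' outputs are independent
        if a + b == target_sum and joint > best_p:  # constraint filters candidates
            best, best_p = (a, b), joint
    return best, best_p


naive = (max(p_first, key=p_first.get), max(p_second, key=p_second.get))
pair, prob = most_probable_consistent(p_first, p_second, TARGET_SUM)
print("independent argmax:", naive, "sum =", sum(naive))
print("constraint-aware:", pair, "p =", round(prob, 2))
```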

8. Consequences and the Current Landscape

- Machine learning, especially deep learning, dominates applied AI and commercial deployment.

- Symbolic methods remain essential in planning, verification, knowledge representation, and explainable systems.

- Modern AI research increasingly integrates statistics, logic, causality, and domain knowledge.

9. Practical Guidance: When to Use What

Use symbolic / rule-based systems when:

- Expert knowledge is compact, stable, and well specified.

- Interpretability, regulatory compliance, or formal guarantees are required.

- Data is scarce but domain expertise is strong.

Use ML / deep learning when:

- Large datasets exist (or effective pretraining is possible).

- The task involves perception or unstructured, high-dimensional data.

- Empirical performance is the primary objective.

Consider hybrid / neuro-symbolic systems when:

- You need both learned representations and explicit reasoning.

- Sample efficiency, interpretability, or causal reasoning is important.

- The problem spans perception, knowledge, and decision-making.

Conclusion

The shift from symbolic AI to machine learning didn’t happen because rules were “wrong” and data was “right.” It happened because the world turned out to be far messier, noisier, and larger than hand-crafted rules could handle. As data exploded and computing power grew, machine learning proved better at dealing with complexity, perception, and scale—delivering results that worked in real products and real markets.

At the same time, today’s AI systems reveal the limits of a purely data-driven approach. Deep learning excels at pattern recognition but often struggles with reasoning, explainability, and causal understanding—areas where symbolic AI has always been strong. This has led the field full circle, toward hybrid approaches that combine learning from data with explicit knowledge and logic.

The real lesson from AI’s history is not that one paradigm replaced the other, but that intelligence requires both. Symbolic reasoning provides structure and meaning; machine learning provides flexibility and adaptability. The future of AI lies in systems that can learn from data, reason with knowledge, and explain their decisions—bringing together the best of both worlds.

References

- Newell, A., Shaw, J. C., & Simon, H. A. (1956). The Logic Theory Machine: A Complex Information Processing System. IRE Transactions on Information Theory.

- McCarthy, J. (1959). Programs with Common Sense. In Proceedings of the Teddington Conference on the Mechanization of Thought Processes.

- Minsky, M., & Papert, S. (1969). Perceptrons. MIT Press. (Analysis of limitations of single-layer perceptrons.)

- Rosenblatt, F. (1958). The Perceptron: A probabilistic model for information storage and organization in the brain. Psychological Review, 65(6), 386–408.

- Shortliffe, E. H. (1976). MYCIN: A Rule-Based Consultation Program for Infectious Disease Diagnosis. PhD dissertation, Stanford University.

- Buchanan, B. G., & Shortliffe, E. H. (1984). Rule-Based Expert Systems: The MYCIN Experiments of the Stanford Heuristic Programming Project. Addison-Wesley.

- Lighthill, J. (1973). Artificial Intelligence: A General Survey. Science Research Council (UK).

- Rumelhart, D. E., Hinton, G. E., & Williams, R. J. (1986). Learning representations by back-propagating errors. Nature, 323, 533–536.

- Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). ImageNet classification with deep convolutional neural networks. Advances in Neural Information Processing Systems 25 (NIPS 2012).

- LeCun, Y., Bengio, Y., & Hinton, G. (2015). Deep learning. Nature, 521(7553), 436–444.

- Garcez, A. d’Avila, Lamb, L., & Gabbay, D. (2009). Neural-Symbolic Cognitive Reasoning. Springer.

- Besold, T. R., Garcez, A. d’Avila, Bader, S., et al. (2017). Neural-symbolic learning and reasoning: A survey and interpretation. arXiv:1711.03902.

- Pearl, J. (2000). Causality: Models, Reasoning, and Inference. Cambridge University Press.

- Marcus, G. (2018). Deep Learning: A Critical Appraisal. arXiv:1801.00631.